Tools vs Technique: The Capability Gap

By Anthea Roberts

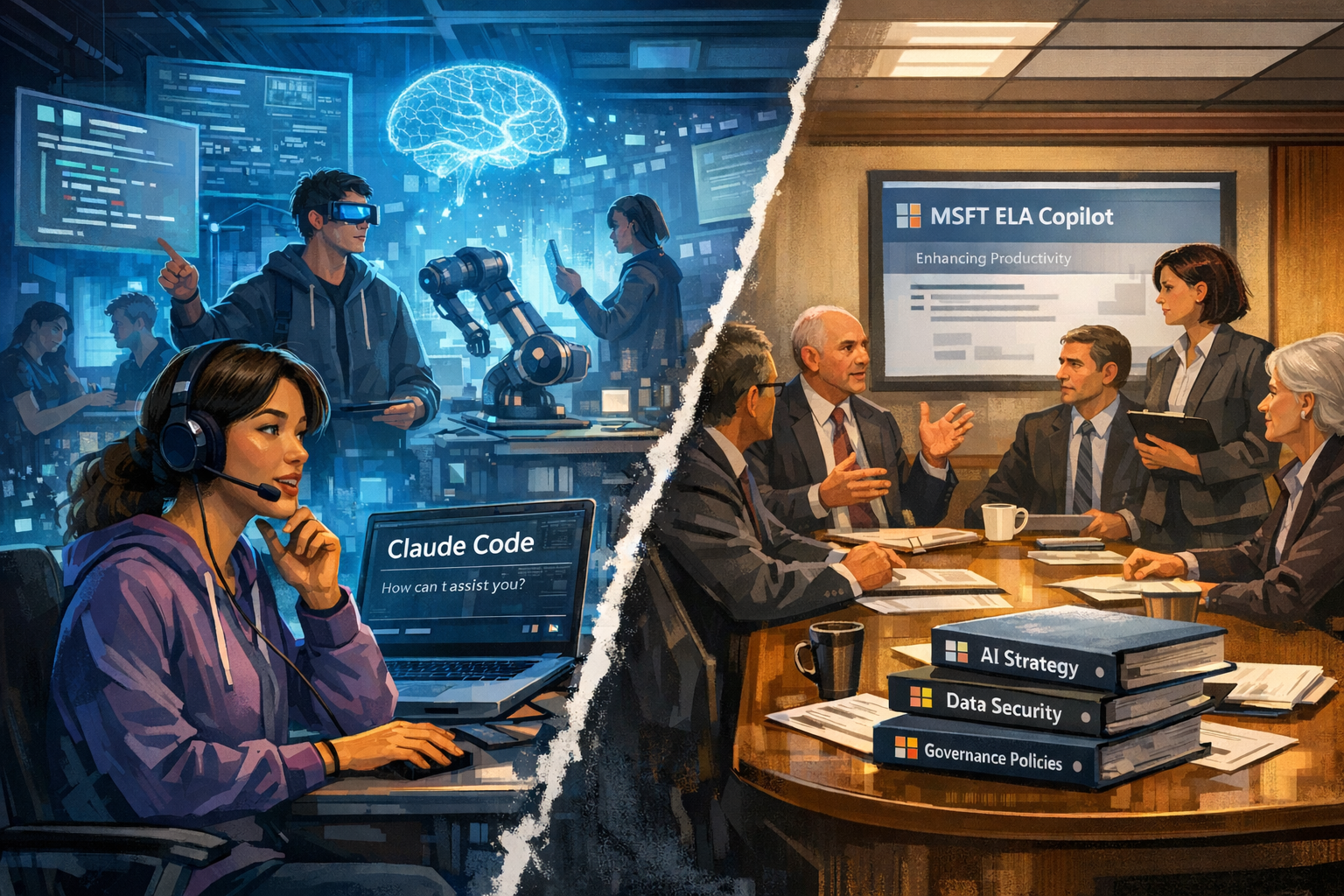

Sarah Guo, a venture capitalist who runs the AI-focused fund Conviction, posted something in February that I haven't been able to shake. "In order to have a sense of AI adoption right now," she wrote, "you really need to have one foot at the bleeding edge with the kids Wispr-ing through a mic at Devin all day and one foot in the 99% enterprise world where they're still debating the merits of leaving 'bundled-MSFT-ELA-Copilot.'"

I reposted it with eight words: "This is my life. The juxtaposition is disorienting."

I meant it literally. I run a small AI-native firm where my team and I talk to coding agents through voice dictation, run thirty or more AI sessions a day each, and have rebuilt essentially every workflow we have around human-AI collaboration. I also spend my weeks talking to large organisations — government agencies, major corporations — where smart, capable people are still trying to figure out how to use Copilot or whether to venture beyond it.

The hard work these organisations have done is real. They have governance frameworks, security protocols, data infrastructure, boards asking hard questions about AI strategy. They have built what I've started calling Level 1 adoption: the foundations. Responsible use policies. Automation of routine tasks. Summarise this document. Draft this email. Clean this dataset.

But here's the catch: that work is now becoming table stakes.

The value that changes what an organisation is actually capable of — the value most people haven't yet unlocked — is at Level 2: using AI to augment the complex knowledge work that drives differentiated business value. Level 1 automates the routine. Level 2 augments the hard stuff — the analysis, the synthesis, the judgement calls. It requires knowing how to build systems that structure how AI reasons through problems — agents, skills, workflows, context — and the judgement to orchestrate them.

The distance between Level 1 and Level 2 is large. Almost nobody has crossed it. The models are there. The tools are there. What is missing is technique.

The AI conversation in the news and in social media is almost entirely about tools. Which model is best? Which platform wins? Should you use Claude or GPT or Gemini? But to me, that seems like the wrong question. The right question is: who has developed the technique to use whatever tools win?

Two people can sit down with exactly the same AI tool and get wildly different results. The difference is that one has spent months rebuilding how they work — rethinking their processes, developing AI fluency, learning through practice what the AI is good at and where it falls apart, and developing a machine-readable and accessible context layer. The other opened it up, tried a few prompts, got mediocre results, and concluded AI was overhyped.

The gap between those two people is not a tools gap. It is a technique gap. And that gap is widening, not closing. The models improve exponentially. Human technique improves linearly or in step changes. The distance between what AI can do and what most people know how to do with it grows wider every month.

There is a distribution question here that makes this problem genuinely hard. The technique — the embodied know-how of working with AI at Level 2 — currently resides almost entirely in AI-native startups. Small teams. People who have been building with these tools every day for months or years. People who have had the freedom to tear up their workflows and start over, to fail fast, to iterate, to develop intuitions that only come from sustained practice.

The Greeks had a word for this kind of knowledge: metis. It's the practical intelligence of the sailor who reads the water, the carpenter who feels when the wood is right. You can't learn it from a manual. You can't acquire it in a two-day workshop. You can't get it from a pilot programme that runs for six weeks with a carefully scoped use case. Metis comes from doing. From immersion. From time in the water. You can learn an awful lot from doing it alongside people who are really good, but you have to be doing it in an applied way.

I know what developing this kind of metis feels like because I lived through it. I was already an avid AI user before any agents arrived on the scene. I had built complex chatbot instructions and workflows. I used all three models (ChatGPT, Claude and Gemini) all of the time, feeding outputs from one into the others for second opinions. I had already stopped typing in favour of Wispr Flow months earlier. I was not starting from zero.

What changed when I started working with Claude Code, an AI coding agent, was that it had not only a smart mind (the model) but also hands (the harness with tools). It could not only search the internet but also retrieve documents and save them for me on my computer. It could reason over multiple rounds with searches and tool calls rather than replying straight away. It could find and edit files on my computer. If I wasn't careful, it could also delete important files on my computer (ask me how I know this)!

Claude Code was not a chatbot I was prompting. It was a collaborator I was co-creating with to achieve serious work outcomes. That distinction — mind with hands, and co-creation instead of chat — required a completely different way of working. I had to learn what to trust Claude Code with and what to check. I had to rebuild my filing systems so agents could read them. I had to develop judgement about when to direct and when to let it reason. That took months, not days.

Even in a small team that was ready and willing, the technique didn't spread on its own. I started out by describing the really positive interactions I'd had with Claude Code and encouraging (perhaps strongly encouraging) everyone to try it. We gave everyone access and initial training, followed by multiple drop-in sessions a week where people could get help with connecting various things and improving their techniques.

When encouragement didn't work for everyone, I eventually mandated the use of these agents. You can't have a tactile feel for how to build agents if you are not using them. I sat with people as they learned. I passed on tips, tricks and advice. Some converted quickly. Others needed weeks. Nearly everyone had an "aha" moment, but it required sustained, applied, supported practice. Not just a training session. Not just a direction from a CEO who did not live and breathe this herself.

And that's a team of fewer than ten people who already wanted to be there.

Which brings me back to the capability gap.

The organisations that most need this capability are the ones managing billions, the ones making decisions that affect millions of people, the ones with the most complex problems and the highest stakes. They are also precisely the ones where it is hardest to develop this sort of AI-native fluency. They can't tear up their workflows. They have governance obligations, fiduciary duties, legacy systems, regulatory constraints, hundreds of employees who all need to be brought along. All of this makes it harder to develop AI fluency and drive real change in value.

So you end up with this structural mismatch. The know-how resides in startups — small teams who have had the freedom to rebuild everything. But the urgent need for that know-how sits inside enterprises that can't rebuild anything without a business case, a risk assessment, and a change management plan. The bridge between them is not obvious.

The instinct of most large organisations is to solve this by buying software. Subscribe to a platform. Deploy a tool. But this is a Level 1 answer to a Level 2 problem. You can't buy technique. You can't subscribe to metis. You have to develop it yourself, even if it makes an enormous difference to have people working alongside you to show you the way.

I've become increasingly convinced that the answer is something other than traditional SaaS-style tools. I've started calling it capability transfer: the actual know-how of working with AI at the level that changes what's possible, transferred through embedding with teams, building together from agent and workflow starter packs, and learning through applied work on real problems.

I genuinely don't know what the bridge looks like yet. Will enterprises end up acquiring AI-native startups not for their products but for their people and their practice? We have certainly seen that happening through the acqui-hire events over the last year in Silicon Valley. Will it require something that doesn't look like consulting or software — something more like capability transfer, where you learn by building alongside people who are already good at this?

We are increasingly seeing mixed software and service offerings, whether from software firms with forward-deployed engineers or consulting firms with software capabilities. Both are attempts to narrow this gap. Really good outcomes in AI come from combining the best tools with the best techniques and applying them to your own curated context.

A year ago, I was asking: which tools should we use? How do we get the most from this model? Now I'm asking: who has the capacity to really use these tools, and what made that come naturally for them? Who has the capacity to learn at this extremely fast rate of change? What techniques can be learned and passed between groups? How do you develop metis in an organisation that can't afford to start over?

While most people are still fixated on the model comparison, I think that the bottleneck isn't the tool. The bottleneck is the human and organisational capacity to use the tools. And that bottleneck is going to define who actually captures the value of this technology — and who just talks about it.